Structure from Motion

A favorite music video of mine is Holly Herndon’s Chorus, directed by Akihiko Taniguchi. During the video, the camera pans around 3D models of cluttered desks. About halfway through, objects start floating around the desks and spinning wildly. It is great.

The models in this video look like they were created using a photogrammetric technique called structure from motion (SfM). The concept is fairly straightforward: given photographs of an object taken from many different angles, an algorithm can construct a 3D model of that object.

I set out to use structure from motion to create a 3D model of my desk as a tribute to the Holly Herndon video. Sadly, the lighting in my apartment was too uneven to create a good result. Instead, I cycled around my neighborhood to look for a new subject. I ended up choosing an obelisk-ish stone object (I think at one point it may have held a plaque) on a path near the Elbow River.

How does it work?

If you are curious about the theory of structure from motion, Schönberger and Frahm’s 2016 paper (pdf) has a step-by-step summary of the pipeline. The algorithm consists of two main stages:

- Correspondence Search: First, features are identified in the input photographs using a descriptor such as SIFT. The features in each image are then compared against those in every other image to determine which photographs overlap.

- Incremental Reconstruction: During this phase, a model is initialized and new images are added incrementally. Camera position is determined by solving the perspective-n-point problem and new point locations are added via triangulation. After each iteration a bundle adjustment step is performed to refine the model and reduce noise.
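To make the triangulation step a little more concrete, here is a minimal sketch in plain Python. It uses the midpoint method, which is a simpler stand-in and not necessarily what VisualSfM or the paper's pipeline uses. Each camera is reduced to a center and a viewing ray toward the matched feature, and the 3D point is estimated as the midpoint of the shortest segment between the two rays. The function name and the example coordinates are made up for illustration.

```python
# Toy midpoint triangulation. All names and values here are illustrative
# assumptions; nothing below is taken from VisualSfM.

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def add(a, b):
    return tuple(x + y for x, y in zip(a, b))

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def scale(a, k):
    return tuple(x * k for x in a)

def triangulate_midpoint(c1, d1, c2, d2):
    """Estimate a 3D point from two rays c1 + s*d1 and c2 + t*d2.

    c1, c2 are camera centers and d1, d2 are ray directions, all in
    world coordinates. Returns the midpoint of the shortest segment
    connecting the two rays.
    """
    w = sub(c1, c2)
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w), dot(d2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; the point cannot be triangulated")
    s = (b * e - c * d) / denom   # position along ray 1 closest to ray 2
    t = (a * e - b * d) / denom   # position along ray 2 closest to ray 1
    p1 = add(c1, scale(d1, s))
    p2 = add(c2, scale(d2, t))
    return scale(add(p1, p2), 0.5)

# Two hypothetical cameras two units apart, both looking at the same feature.
true_point = (1.0, 2.0, 5.0)
cam_a, cam_b = (0.0, 0.0, 0.0), (2.0, 0.0, 0.0)
ray_a = sub(true_point, cam_a)
ray_b = sub(true_point, cam_b)

estimate = triangulate_midpoint(cam_a, ray_a, cam_b, ray_b)
print(estimate)
```

With perfect rays the estimate lands on the original point. In a real reconstruction the rays are noisy and rarely intersect, which is part of why the bundle adjustment step is needed afterward.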

Open source workflow

There are plenty of excellent photogrammetry tutorials on the internet. If you don’t have a programming background, don’t despair. There is no requirement to understand any algorithms or write any code. I followed along with a blog post by Jesse Spielman and a video tutorial by Phil Nolan while building my model.

Commercial photogrammetry software exists, but it is possible to build a great model using a completely free stack. For this model I used:

- Darktable - photo editing

- VisualSfM - structure from motion

- Meshlab - point cloud preprocessing, surface reconstruction, and texturing

- Sketchfab - postprocessing and sharing

As long as you have taken adequate input photos and chosen an appropriate subject, VisualSfM will handle all of the structure from motion heavy lifting with a few button clicks.

Meshlab has built-in tools for all of the surface reconstruction and texturing steps that come after SfM. Getting the correct sequence of preprocessing tools and reconstruction parameters takes a bit of trial and error.

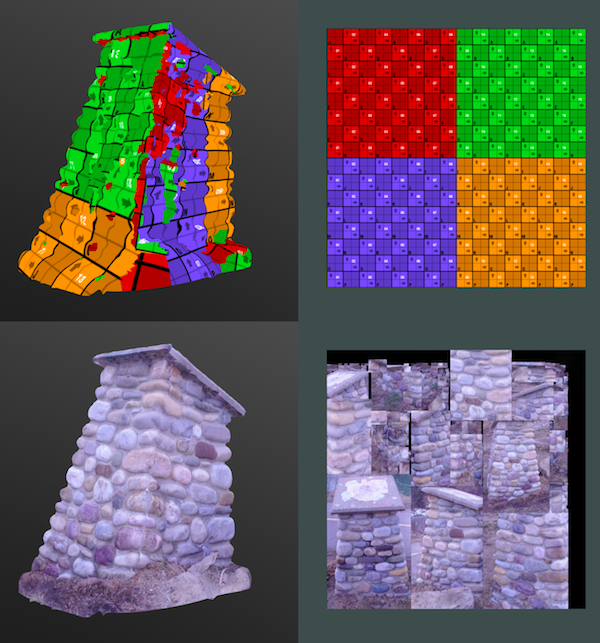

Below are a few screenshots and videos I captured during the process:

Wrap up

I didn’t have immediate success with this photogrammetry project. The first handful of models I tried failed, sometimes horribly. It took consistently lit input photos and stumbling on the right meshing parameters to get a result I was happy with. There are a few things I wish I knew when I got started:

- Taking good input photos and picking an appropriate subject are key

- Make sure to experiment with the parameters in the meshing step, especially the minimum number of samples if your data is noisy

- Read and watch plenty of tutorials before diving head first into the process. There is an active photogrammetry hobbyist scene and lots of resources out there

Structure from motion is a sophisticated computer vision algorithm with a lot going on under the hood. I didn’t dig deep, but I tried to get a basic understanding of what is going on. To follow up on this project, I am planning on circling back to learn more about computer vision fundamentals.